I spent a couple of days developing my own image-to-ascii converter API in Go, since I will need one for a future project and wasn't happy with the results from existing options. I thought I would share it here. It is free and open-source.

Try it out: asciiart.benngu.com

Or check out the GitHub repository: nebbyJammin/asciiart

What's so special about it?

Here is a list of features/design choices I made during development:

- Support for colour
  - Not all terminals support colour, and those that do may support different colour spaces.
  - This library supports 0/3/4/8/24-bit colour.
- Edge detection
  - A distinguishing feature of true ascii art is that the actual shape/curvature of the characters is used to outline edges and shapes.
  - My tool aims to emulate that using some cool techniques, such as a Sobel kernel and first- and second-derivative approximations of luminosity, to detect where edges are.
- High modularity and customisability
  - Don't like how the ascii output looks? You can program your own logic to use different characters and emphasise different colours.
- High efficiency
  - While the library does not use GPU hardware acceleration, I still wanted it to be extremely efficient without going into cgo territory (overkill for most applications).
  - For reference, converting most images below 1080p resolution into a 100x50 ascii grid takes ~20ms on average.
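For anyone curious what the colour depths mean in practice, here is a minimal sketch of the 24-bit and 8-bit foreground escape sequences a converter like this has to emit. The helper names (trueColorFG, ansi256FG, cube6) are hypothetical, and the 8-bit version uses simple proportional rounding onto the 6x6x6 colour cube, which is an approximation; the library's own colour mapper may quantise differently.

```go
package main

import "fmt"

const reset = "\x1b[0m" // SGR 0: reset all attributes

// trueColorFG returns the 24-bit ("truecolor") foreground escape sequence.
func trueColorFG(r, g, b uint8) string {
	return fmt.Sprintf("\x1b[38;2;%d;%d;%dm", r, g, b)
}

// cube6 maps an 8-bit channel onto one of the 6 levels (0-5) of the
// 256-colour palette's 6x6x6 cube using proportional rounding.
// The real cube levels are not evenly spaced, so this is approximate.
func cube6(v uint8) int {
	return (int(v)*5 + 127) / 255
}

// ansi256FG returns the 8-bit (256-colour) foreground escape sequence,
// using the cube indices 16-231.
func ansi256FG(r, g, b uint8) string {
	idx := 16 + 36*cube6(r) + 6*cube6(g) + cube6(b)
	return fmt.Sprintf("\x1b[38;5;%dm", idx)
}

func main() {
	// The same orange at two colour depths, each followed by a reset.
	fmt.Println(trueColorFG(255, 128, 0) + "@" + reset)
	fmt.Println(ansi256FG(255, 128, 0) + "@" + reset)
}
```

3-bit colour uses the shorter codes 30-37 (4-bit adds the bright variants 90-97), and 0-bit simply means emitting no colour escape sequences at all.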

If this sounds cool, please check it out. The online tool at asciiart.benngu.com will remain completely free.

Technical Details

If you are curious about some of the technical workings of the ascii-art tool, I have tried to explain them as clearly as possible below. The edge detection algorithm is quite involved.

The steps from taking an image to outputting an ascii string look something like this:

- Downscale the image: reduce the amount of computation needed later on by using nearest-neighbour sampling.
- Calculate and cache luminosity: it is important to cache the luminosity of each pixel, because we will refer to it quite often.
- Prepare edge detection: Sobel magnitude, Sobel gradient and Laplacian.
  - Don't worry about the big words; it's just a set of numbers involved in detecting edges.
- Loop through each character and generate the ascii character from the parameters above.

Step 1: Downscale the Image

We downscale the image to reduce computation time. Since we are dealing with ascii-art, we are already okay with losing a substantial amount of quality. We will always downscale to the target ascii size (or smaller).

This can sometimes be annoying, though, because the default scaling mode respects the original image's aspect ratio. As a result, the output is often not exactly the size requested. I have also included an option to scale directly to the target size, ignoring the aspect ratio of the original image. This option will often produce warped images, but I wanted to provide it for convenience's sake.

Step 2: Calculate and Cache Luminosity per Downscaled Pixel

Luminosity is an approximation of the brightness of a particular RGB pixel. If you are using a 32-bit colour space (8 bits for each channel, including the alpha channel), a common approximation is:

```pseudocode
luminosity = 0.2126 * red + 0.7152 * green + 0.0722 * blue
```

This formula weights green as the most luminous colour (we perceive green much more readily than red or blue), then red, then blue. This gives us a nice way to approximate perceived brightness, which we will use later to pick the ascii character for each position.

To take it one step further, I wanted full compatibility with the .png format, which supports an alpha channel. I modified the common formula to simply scale its output by the alpha channel:

```pseudocode
luminosity = (0.2126 * red + 0.7152 * green + 0.0722 * blue) * alpha / 255
```

I forgot to mention: because the three weights sum to 1, these luminosity approximations stay between 0 and 255. Very convenient!
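As a reference point, here is the floating-point version of the alpha-scaled formula in Go, for non-premultiplied 8-bit channels. luminosityF is a hypothetical name; it is mainly useful as a baseline to check a faster integer version against.

```go
package main

import "fmt"

// luminosityF returns the alpha-scaled luminosity of a non-premultiplied
// 8-bit RGBA pixel, using the weights from the formula above.
// The weights sum to 1, so the result lies in [0, 255].
func luminosityF(r, g, b, a uint8) float64 {
	lum := 0.2126*float64(r) + 0.7152*float64(g) + 0.0722*float64(b)
	return lum * float64(a) / 255
}

func main() {
	fmt.Printf("opaque green: %.3f\n", luminosityF(0, 255, 0, 255))
	fmt.Printf("half-transparent green: %.3f\n", luminosityF(0, 255, 0, 128))
	fmt.Printf("fully transparent white: %.3f\n", luminosityF(255, 255, 255, 0))
}
```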

Another really nice optimisation is to rewrite this formula to avoid floating-point arithmetic entirely, by scaling the weights up to integers. Floating-point arithmetic tends to be more expensive than integer arithmetic, and that adds up when we are doing millions of these calculations for a single image.

We get something like this:

```pseudocode
luminosity = (2126 * red + 7152 * green + 722 * blue) * alpha / 2550000
```

Here the weights are multiplied by 10000, so the divisor becomes 10000 * 255 = 2550000.

Note that in the source code the implementation is slightly different, because Go represents RGBA channels with 16 bits of precision each (0-65535) rather than 8. The constants differ to account for going from 256 values to 65536 values.

Step 3: Edge Detection Preparation

This is where it gets exciting. We use a couple of tricks to detect edges.

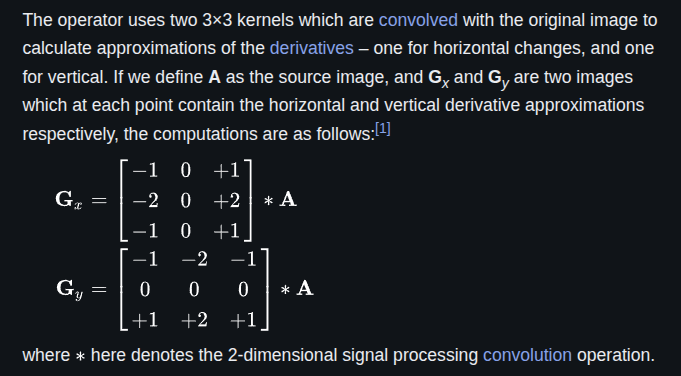

The first thing we do is apply what is called the Sobel Kernel: a pair of 3x3 convolution kernels applied to every pixel in the downscaled image (except those along the sides and corners, which lack a full 3x3 neighbourhood).

Sobel Kernel Definition

For more details, check out the Wikipedia article on the Sobel operator.

Gx and Gy are approximations for the change in luminosity in the x and y directions respectively.
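The two kernels can be applied as a plain weighted sum over each 3x3 neighbourhood. Here is a minimal Go sketch over a row-major luminosity slice; sobelAt and the slice layout are assumptions for illustration, and the sign convention (gx > 0 when brightness increases to the right, gy > 0 when it increases downwards) is one common choice that the library may not share.

```go
package main

import "fmt"

// Sobel kernels laid out for a direct weighted sum (correlation), so that
// gx = right column - left column and gy = bottom row - top row.
var sobelX = [3][3]int{
	{-1, 0, 1},
	{-2, 0, 2},
	{-1, 0, 1},
}

var sobelY = [3][3]int{
	{-1, -2, -1},
	{0, 0, 0},
	{1, 2, 1},
}

// sobelAt returns (gx, gy) at interior pixel (x, y) of a row-major
// luminosity grid of width w. Border pixels must be skipped by the caller.
func sobelAt(lum []int, w, x, y int) (gx, gy int) {
	for ky := 0; ky < 3; ky++ {
		for kx := 0; kx < 3; kx++ {
			v := lum[(y+ky-1)*w+(x+kx-1)]
			gx += sobelX[ky][kx] * v
			gy += sobelY[ky][kx] * v
		}
	}
	return gx, gy
}

func main() {
	// 3x3 patch with a dark-to-bright vertical boundary on the right.
	lum := []int{
		0, 0, 255,
		0, 0, 255,
		0, 0, 255,
	}
	gx, gy := sobelAt(lum, 3, 1, 1)
	fmt.Println(gx, gy)
}
```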

We can calculate the "magnitude of the change in luminosity" (i.e. the strength of the edge) as |G|:

```pseudocode
|G| = sqrt(Gx*Gx + Gy*Gy)
```

An edge is detected if |G| is bigger than some (customisable) threshold.

Because square roots are expensive, we calculate the squared Sobel magnitude instead and compare it against the squared threshold. Both sides are non-negative, so the comparison gives the same result:

```pseudocode
Gmag2 = Gx*Gx + Gy*Gy
```

We can also calculate the Sobel gradient, which will be used later to determine what sort of curvature is happening locally around a pixel (note that this ratio is undefined when Gx is 0, i.e. when luminosity changes only vertically):

```pseudocode
Ggrad = Gy/Gx
```
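Both ideas are easy to express in code. The sketch below (hypothetical helper names) compares squared values instead of taking a square root, and uses math.Atan2 in place of the raw Gy/Gx ratio. That substitution is my own choice for illustration, not necessarily what the library does, but it sidesteps the division by zero when Gx is 0.

```go
package main

import (
	"fmt"
	"math"
)

// isEdge compares the squared Sobel magnitude with the squared threshold,
// which gives the same answer as |G| >= threshold without a square root.
func isEdge(gx, gy, threshold int) bool {
	return gx*gx+gy*gy >= threshold*threshold
}

// gradientAngleDeg returns the gradient direction in degrees, folded into
// [0, 180) since an edge looks the same whichever side is brighter.
// Unlike Gy/Gx, atan2 is well defined when gx == 0.
func gradientAngleDeg(gx, gy int) float64 {
	deg := math.Atan2(float64(gy), float64(gx)) * 180 / math.Pi // (-180, 180]
	return math.Mod(deg+360, 180)
}

func main() {
	fmt.Println(isEdge(300, 400, 500), isEdge(300, 400, 501)) // here |G| = 500
	fmt.Printf("%.1f %.1f\n", gradientAngleDeg(0, 1020), gradientAngleDeg(1020, 1020))
}
```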

We could stop here, and in fact, in my testing, this implementation was pretty good. However, edges would sometimes double up: I was essentially getting edges at least two characters thick. This happens because the 3x3 Sobel window sees a sharp transition from both sides, so the pixels on either side of the boundary both report a strong edge. The result looks very off, especially at such low resolutions (natural for ascii art).

To fix this, we also calculate another scalar, which I will call the Laplacian Value. It is calculated with a convolution kernel, in the same fashion as the Sobel scalars:

```go
// Here is some documentation I wrote a while ago on the Laplacian in the source code
/*
	<-- Laplacian -->
	The purpose of the Laplacian is to find the true edge location.
	The Laplacian is sensitive to rapid intensity bends.
	For our purposes, it helps mitigate doubled edges from edge detection,
	i.e. we use Sobel to figure out the edge direction and strength,
	and we use the Laplacian to find the true origin of the edge.

	    |  0  +1   0 |
	L = | +1  -4  +1 |
	    |  0  +1   0 |

	However, this doesn't take into account the aspect ratio.
	Unlike the Sobel operations, we will just normalize the value in place
	by using this kernel instead.

	    |  0            1/aspect_ratio^2     0 |
	L = | +1   -(2 + 2/aspect_ratio^2)      +1 |
	    |  0            1/aspect_ratio^2     0 |

	Probably, we could optimise this better. For now it works great.
*/
```

Here L is the kernel we use, and aspect_ratio is the output aspect ratio (usually 2). Some values need to be scaled by the output aspect ratio, because moving up by one character is not the same distance as moving across by one character: ascii characters are usually about 1:2 (width:height), so the output aspect ratio is the inverse, 2:1.
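To see why small Laplacian values mark the true edge, here is a sketch of an aspect-ratio-adjusted Laplacian written as a horizontal second difference plus a vertical one scaled by 1/aspect_ratio^2. Writing it this way makes the centre weight -(2 + 2/aspect_ratio^2), so the weights sum to zero and flat regions give 0. The function name and grid layout are assumptions, not the library's API.

```go
package main

import "fmt"

// laplacianAt applies a five-point Laplacian at interior cell (x, y) of a
// row-major luminosity grid of width w. Vertical neighbours are aspectRatio
// times further apart than horizontal ones, so their second difference is
// divided by aspectRatio^2.
func laplacianAt(lum []float64, w, x, y int, aspectRatio float64) float64 {
	p := func(dx, dy int) float64 { return lum[(y+dy)*w+(x+dx)] }
	k := 1 / (aspectRatio * aspectRatio)
	horiz := p(-1, 0) - 2*p(0, 0) + p(1, 0)
	vert := p(0, -1) - 2*p(0, 0) + p(0, 1)
	return horiz + k*vert
}

func main() {
	// Three identical rows containing a blurred step: 0, 0, 100, 200, 200.
	row := []float64{0, 0, 100, 200, 200}
	var lum []float64
	for i := 0; i < 3; i++ {
		lum = append(lum, row...)
	}
	for x := 1; x <= 3; x++ {
		fmt.Printf("x=%d laplacian=%.0f\n", x, laplacianAt(lum, len(row), x, 1, 2))
	}
}
```

On this blurred step the Sobel operator responds at all three interior cells (gx = 400, 800 and 400 at x = 1, 2 and 3), but the Laplacian is 100, 0 and -100, so only the centre cell passes a small |L| threshold. That zero crossing is what removes the doubled edges.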

Truth be told, we apply a similar aspect-ratio adjustment to the gradient to maintain accuracy.

Step 4: Putting it all together

Okay awesome. We did a lot of preparation work up until this point. Now it is time to generate the ascii output.

```go
/*
ASCIIGenWithSobel converts a SobelProvider to ascii string.
If you are not interested in making custom ascii generators, see Convert(), ConvertBytes() and ConvertReader()
*/
func (a *AsciiConverter) ASCIIGenWithSobel(sobelProv SobelProvider, aspect_ratio float64) string {
	// Approximate the threshold, accounting for character aspect ratio
	adjustedGMag2Threshold := int(a.SobelMagnitudeSqThresholdNormalized * (aspect_ratio * aspect_ratio))

	width, height := sobelProv.Width(), sobelProv.Height()
	edgeMapper := a.EdgeMapperFactory(aspect_ratio)

	var bufferSize int
	// In most cases, we will overallocate by a few hundred
	// bytes to ensure there is no reallocation of the buffer
	// This is because it cannot be known how much
	// room should be left for the colour ANSI escape sequences
	bufferSize = int((a.BytesPerCharToReserve + a.AdditionalBytesPerCharColor) * float64(width+1) * float64(height)) // width + 1 because leave a byte for the new line byte

	var asciiBuilder strings.Builder
	asciiBuilder.Grow(bufferSize)

	var prevWasBold bool = false
	var prevCode int = -1
	var code = -1
	var escapeStr = ""

	// Reset all styles before we write
	asciiBuilder.WriteString("\x1b[0m")
	for y := range height {
		for x := range width {
			code, escapeStr = a.ANSIColorMapper(sobelProv, x, y) // Get color
			if code != prevCode {
				prevCode = code
				asciiBuilder.WriteString(escapeStr)
			}

			// Check if we should use the edge or the luminosity mapper
			if sobelProv.SobelMag2At(x, y) >= adjustedGMag2Threshold && math.Abs(sobelProv.SobelLaplacianAt(x, y)) <= a.SobelLaplacianThresholdNormalized {
				if a.SobelOutlineIsBold && !prevWasBold {
					prevWasBold = true
					asciiBuilder.WriteString("\x1b[1m") // Make bold
				}
				asciiBuilder.WriteRune(edgeMapper(sobelProv, x, y))
			} else {
				// Use the normal luminosity mapper
				if prevWasBold {
					prevWasBold = false
					asciiBuilder.WriteString("\x1b[22m") // Reset bold
				}
				asciiBuilder.WriteRune(a.LuminosityMapper(sobelProv, x, y))
			}
		}
		asciiBuilder.WriteRune('\n')
	}

	// Reset all styles
	asciiBuilder.WriteString("\x1b[0m")
	return asciiBuilder.String()
}
```

Above is the source code; I just want to take a look at what happens inside the inner for loop.

First we check if the current character has a strong enough edge magnitude as provided by sobelProv.SobelMag2At(x, y).

We also check that the absolute Laplacian value is no bigger than the specified threshold. The Laplacian crosses zero at the centre of an edge, so this check keeps the true edge position and discards its doubled-up neighbours.

If both of these checks pass, we hand this position over to the edge mapper, which assigns it an "edge character". I think the default implementation is pretty good, but users of the API are totally welcome to hook up their own.

If either check fails, the logic is handed over to the standard luminosity mapper, where a pixel is given an ascii character purely based on its brightness.
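For completeness, here is what a very simple luminosity mapper could look like: it splits 0-255 into equal bands and indexes a character ramp. The ramp string and the function name are hypothetical; the library's default mapper may use different characters or spacing.

```go
package main

import "fmt"

// ramp runs from "empty" to "dense" characters, which reads as dark-to-bright
// on a dark terminal background. It is an illustrative choice only.
const ramp = " .:-=+*#%@"

// luminosityRune maps a luminosity in [0, 255] onto ramp by splitting
// the range into len(ramp) equal-width bands.
func luminosityRune(lum uint8) rune {
	idx := int(lum) * len(ramp) / 256 // always in 0 .. len(ramp)-1
	return rune(ramp[idx])
}

func main() {
	for _, lum := range []uint8{0, 64, 128, 192, 255} {
		fmt.Printf("%3d -> %q\n", lum, luminosityRune(lum))
	}
}
```

On a light background you would simply reverse the ramp.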

As a note, we are also using a third "mapper function" called the "color mapper" (ANSIColorMapper), which returns the ANSI colour escape sequence for each position. I don't think it is worthwhile to go into detail about it.

As usual, users of the API are free to implement their own colour, luminosity and edge mappers.

Extras: How the edge mapper works from a high level

Here is some extra information on how the default implementation of the edge mapper works.

If the current pixel is bright enough (based on luminosity), we assign it a special character using the Sobel/Laplacian scalars.

It primarily uses the Sobel gradient to figure out which character to use.

For regular diagonal lines it may use `/` or `\`, but for steeper lines the function may return `J` or `L`.

Here is a list of characters used:

```pseudocode
|Ll\=/jJ|
```

In the source code, we usually have to deal with the inverse of the Sobel gradient, because an edge drawn with the character `/` actually has a negative gradient. This is because the change in brightness is happening in a negative direction (in image coordinates, y increases downwards).
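Here is a heavily simplified sketch of an edge mapper, assuming Sobel components where gy > 0 means brightness increases downwards. It flips gy so angles are measured with y pointing up, rotates the gradient by 90 degrees to get the direction of the edge itself, and buckets that angle. edgeRune is a hypothetical name, not the library's EdgeMapperFactory; only four of the characters from the list are used, and the `L`/`J`/`l`/`j` cases and the aspect-ratio correction are left out.

```go
package main

import (
	"fmt"
	"math"
)

// edgeRune picks an outline character from Sobel components gx and gy.
// Assumption: gx > 0 means brighter to the right, gy > 0 means brighter below.
func edgeRune(gx, gy float64) rune {
	// Flip gy so the angle is measured with y up, as on screen.
	gradAngle := math.Atan2(-gy, gx) * 180 / math.Pi // (-180, 180]
	// The edge runs perpendicular to the gradient; fold into [0, 180).
	edgeAngle := math.Mod(gradAngle+90+360, 180)

	switch {
	case edgeAngle < 22.5 || edgeAngle >= 157.5:
		return '=' // roughly horizontal edge
	case edgeAngle < 67.5:
		return '/' // rising to the right
	case edgeAngle < 112.5:
		return '|' // roughly vertical edge
	default:
		return '\\' // falling to the right
	}
}

func main() {
	// Brighter to the right, brighter below, and brighter below-right.
	fmt.Println(string(edgeRune(1, 0)), string(edgeRune(0, 1)), string(edgeRune(1, 1)))
}
```

A gradient of (1, 1) in image coordinates maps to `/`: the brightness increases down and to the right, so the boundary between dark and bright runs up and to the right.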

Final Notes

Anyways, the premise of this project is pretty simple, but I thought the optimisation techniques and some of the technical details that go into designing and developing a solution like this were pretty fun and interesting. AFAIK, ascii converter projects don't commonly use the techniques discussed here, so I thought it was worth sharing.